Basic concepts

Kurzweil

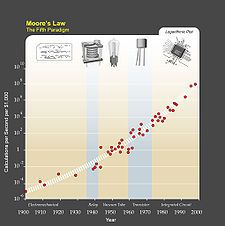

Kurzweil writes that, due to paradigm shifts, a trend of exponential growth extends Moore's law from integrated circuits to earlier transistors, vacuum tubes, relays, and electromechanical computers. He predicts that the exponential growth will continue, and that in a few decades the computing power of all computers will exceed that of human brains, with superhuman artificial intelligence appearing around the same time.

Many of the most recognized writers on the singularity, such as Vernor Vinge and Ray Kurzweil, define the concept in terms of the technological creation of superintelligence, and argue that it is difficult or impossible for present-day humans to predict what a post-singularity world would be like, due to the difficulty of imagining the intentions and capabilities of superintelligent entities.[1][2][3] The term "technological singularity" was originally coined by Vinge, who made an analogy between the breakdown in our ability to predict what would happen after the development of superintelligence and the breakdown of the predictive ability of modern physics at the space-time singularity beyond the event horizon of a black hole.[3] Some writers use "the singularity" in a broader way to refer to any radical changes in our society brought about by new technologies such as molecular nanotechnology,[4][5][6] although Vinge and other prominent writers specifically state that without superintelligence, such changes would not qualify as a true singularity.[1] Many writers also tie the singularity to observations of exponential growth in various technologies (with Moore's Law being the most prominent example), using such observations as a basis for predicting that the singularity is likely to happen sometime within the 21st century.[5][7]

A technological singularity includes the concept of an intelligence explosion, a term coined in 1965 by I. J. Good.[8] Although technological progress has been accelerating, it has been limited by the basic intelligence of the human brain, which has not, according to Paul R. Ehrlich, changed significantly for millennia.[9] However, with the increasing power of computers and other technologies, it might eventually be possible to build a machine that is more intelligent than humanity.[10] If a superhuman intelligence were invented, either through the amplification of human intelligence or through artificial intelligence, it would bring to bear greater problem-solving and inventive skills than humans possess. It could then design a yet more capable machine, or rewrite its own source code to become more intelligent. This more capable machine could then design a machine of even greater capability. These iterations could accelerate, leading to recursive self-improvement, potentially allowing enormous qualitative change before any upper limits imposed by the laws of physics or theoretical computation set in.[11][12][13]

The exponential growth in computing technology suggested by Moore's Law is commonly cited as a reason to expect a singularity in the relatively near future, and a number of authors have proposed generalizations of Moore's Law. Computer scientist and futurist Hans Moravec proposed in a 1998 book that the exponential growth curve could be extended back through earlier computing technologies prior to the integrated circuit. Futurist Ray Kurzweil postulates a law of accelerating returns in which the speed of technological change (and more generally, all evolutionary processes[14]) increases exponentially, generalizing Moore's Law in the same manner as Moravec's proposal, and also including material technology (especially as applied to nanotechnology), medical technology and others.[15] Like other authors, though, he reserves the term "Singularity" for a rapid increase in intelligence (as opposed to other technologies), writing for example that "The Singularity will allow us to transcend these limitations of our biological bodies and brains ... There will be no distinction, post-Singularity, between human and machine".[16] He also defines his predicted date of the singularity (2045) in terms of when he expects computer-based intelligences to significantly exceed the sum total of human brainpower, writing that advances in computing before that date "will not represent the Singularity" because they do "not yet correspond to a profound expansion of our intelligence."[17]
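The arithmetic behind a Moore's Law style extrapolation can be illustrated with a minimal sketch. It assumes a constant doubling period, and the default of two years is an illustrative assumption rather than a figure taken from the sources cited here. The function names `growth_factor` and `years_to_reach` are hypothetical and chosen only for this example.

```python
import math


def growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Return the multiplicative growth after `years`, assuming a fixed doubling period.

    The 2-year default is an illustrative assumption, not a sourced value.
    """
    if doubling_period_years <= 0:
        raise ValueError("doubling_period_years must be positive")
    return 2.0 ** (years / doubling_period_years)


def years_to_reach(target_factor: float, doubling_period_years: float = 2.0) -> float:
    """Return how many years a fixed doubling period needs to reach `target_factor`."""
    if target_factor <= 0:
        raise ValueError("target_factor must be positive")
    if doubling_period_years <= 0:
        raise ValueError("doubling_period_years must be positive")
    return doubling_period_years * math.log2(target_factor)


if __name__ == "__main__":
    # Five doublings in ten years under the assumed 2-year period.
    print(growth_factor(10))
    # Years needed for a thousandfold (2**10) increase under the same assumption.
    print(years_to_reach(1024))
```

Under these assumptions, a fixed doubling period compounds into very large factors over a few decades, which is the property the extrapolations described above rely on. The sketch says nothing about whether the doubling period actually stays constant.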

The term "technological singularity" reflects the idea that such change may happen suddenly, and that it is difficult to predict how such a new world would operate.[18][19] It is unclear whether an intelligence explosion of this kind would be beneficial or harmful, or even an existential threat,[20][21] as the issue has not been dealt with by most artificial general intelligence researchers, although the topic of friendly artificial intelligence is investigated by the Singularity Institute for Artificial Intelligence and the Future of Humanity Institute.[18]

Many prominent technologists and academics dispute the plausibility of a technological singularity, including Jeff Hawkins, John Holland, Jaron Lanier, and Gordon Moore, whose Moore's Law is often cited in support of the concept.[22][23]